a general idea of things

Feb 20, 2026

A few notes about the history behind "Young Men in Suits," the second single from In All Directions, releasing February 25. Subscribe for release updates, if you like.

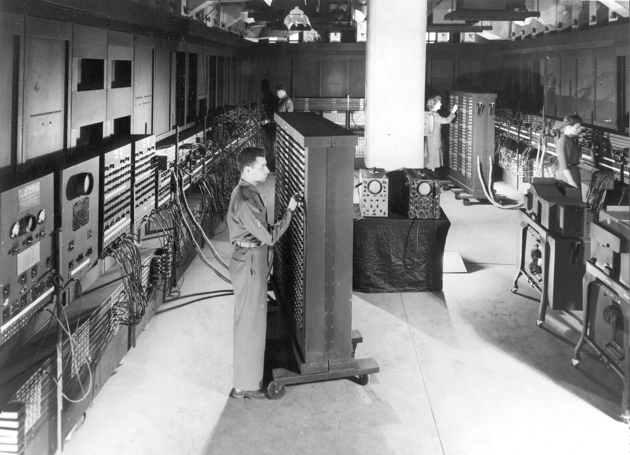

Around the middle of the Twentieth Century, the first digital electronic computers were whirring and wheezing to life. It's well known that these were bulky, sprawling things. Big enough to fill a room, they were more like the groaning mechanical beasts of the previous century than the sleek, glassy devices that would proliferate in the century to come. But they were also fickle, delicate, and brittle, their processing capacities composed of thousands of fragile glass vacuum tubes that often blew out or simply shattered.

Computers of various kinds had been built before. What set this new class of machine apart was that it was designed to be general purpose, capable of processing anything and everything, of ingesting the whole world. But making good on this ambition required first that the world be radically simplified, its plenty cut up and reduced down to two discrete and separable states: off or on, yes or no, one or zero.

Punched into thousands of slips of card stock, this barely-there language allowed the new machines to transform the world and the things in it into crisp numerical outputs. Their human tenders could then turn these outputs into models, simulations, and other instruments for analyzing, making predictions about, and better controlling worldly things and ways.

This is to say that there was more at stake in this moment than the making of a new kind of machine. Computation was coming into focus not just as a technical process that happened inside of specific kinds of devices but also as an elaborate system for conceptualizing, representing, generating knowledge about, and acting upon the world. This system entailed strange new theories about what something was and wasn't, about the relationship between information, knowledge, and meaning, and about what mattered for projects of analysis and control.

In this system, things weren't really things at all. Instead, they were imagined as interlinked informational processes distributed in time; quantifiable measures of uncertainty within a system, reducible to a series of yes/no decisions and if/then propositions. For the purposes of computation, then, there wasn't much difference between unlike things. Reimagined as informatic process, all things became amenable to the same tools and methods of analysis. Computation in this sense became a potent solvent that ate away at the boundaries between types and kinds, inspiring fantasies about the merging of humans and machines. In the popular press, computers were likened to 'electronic brains,' their circuitry to the human nervous system, and the emergence of humanoid cyborgs was treated as imminent.

With the material and categorical limits between things unmattered in this way, there was little to stop computation from stretching out across new fields, domains, and entities. And stretch it did. The world's first general purpose digital electronic computer, the ENIAC, was unveiled to the public in February of 1946. Already by the early 1950s, the US government was pouring exorbitant resources into a hugely ambitious continental air defense system called SAGE, which relied on new digital electronic computers to help detect and intercept incoming bombers (the Cold War and its attendant military paranoia were by this point in full swing). By the 1960s, Secretary of Defense Robert McNamara--who had previously served as VP of Operations at the Ford Motor Company, where he championed the principles of scientific management, prefiguring what we've come to call data-driven decision making--was pushing the US military establishment to fund a range of computational modeling initiatives in support of his counterinsurgency campaign (a euphemism for imperial war of aggression) in Vietnam.

McNamara's DoD had counterparts in the private sector, like the Simulmatics Corporation. Generally regarded as the world's first data analytics firm, Simulmatics parlayed early contracts running voter preference studies for the Kennedy presidential campaign into field offices in the former Saigon, where they offered their computational analysis services up to the failing war effort.

SAGE never really worked, the US's war in Vietnam was a world-historical human and ecological catastrophe, Simulmatics eventually collapsed, and some of its top researchers have since been denounced as war criminals. None of this did much to slow the creeping generalization of computation as a framework for conceptualizing the riotous, unruly plenty of human and earthly existence.

In the decades since, the cultural and social shape of computation has changed. It has been made to appear more personal, more accommodating, and more homely. The devices through which it moves are much smaller, much more durable, and vastly more powerful. It has settled into all the smallest and most intimate corners of our lives, plain and mostly quiet.

That it has proven so protean and adaptable, though, should tell us something about how little its basic premise has changed. Friendship and kinship, labor and work, creative practice, governance and law: all of these are equivalent and ripe for computational optimization when treated as strictly informatic processes rather than as worldly things with material stakes: histories, politics, cultural gravity.

This sense of quiet, friction-free ubiquity--always a fiction, carefully curated and dutifully tended--has lately been abandoned. The possibilities of computation, whatever they might be, are today monopolized by an especially malignant class of braying idiot whose only interest seems to be the at-all-costs exploitation and debasement of human life and intellect, of the planet and its resources, of whatever beautiful things we have left. These people are barely even deserving of scorn; to give them something so fully human as anger seems profane (but we use the tools we have).

But we should at least realize that in so openly tying their monopoly power over computation to the devaluation of human thought and creativity (via what we have learned to call generative AI), to authoritarian domination, to the rehabilitation of empire, and to the most vile forms of racism, they are in some sense just saying the quiet part out loud.

They are boasting about their inheritance.

Image: Cpl. Irwin Goldstein (foreground) sets the switches on one of the ENIAC's function tables at the Moore School of Electrical Engineering. (U.S. Army photo)